Building with AI at Myriade: Intent and Proofs

Last Friday, two of us shipped 17 PRs.

That isn’t a brag — it’s the setup for everything that follows. Shipping quickly with AI inside a serious product (we build data infrastructure that runs on customer warehouses) is only possible if you’ve thought hard about what replaces the safety nets you used to lean on.

The “vibe code” meme — 40 features a day, no tests, no review — works fine for throwaway demos. It falls apart the moment the code has to run in production against a client’s Snowflake account. So the question we’ve been working through at Myriade is: how do you actually benefit from AI in a complex, critical environment?

Here’s where we’ve landed.

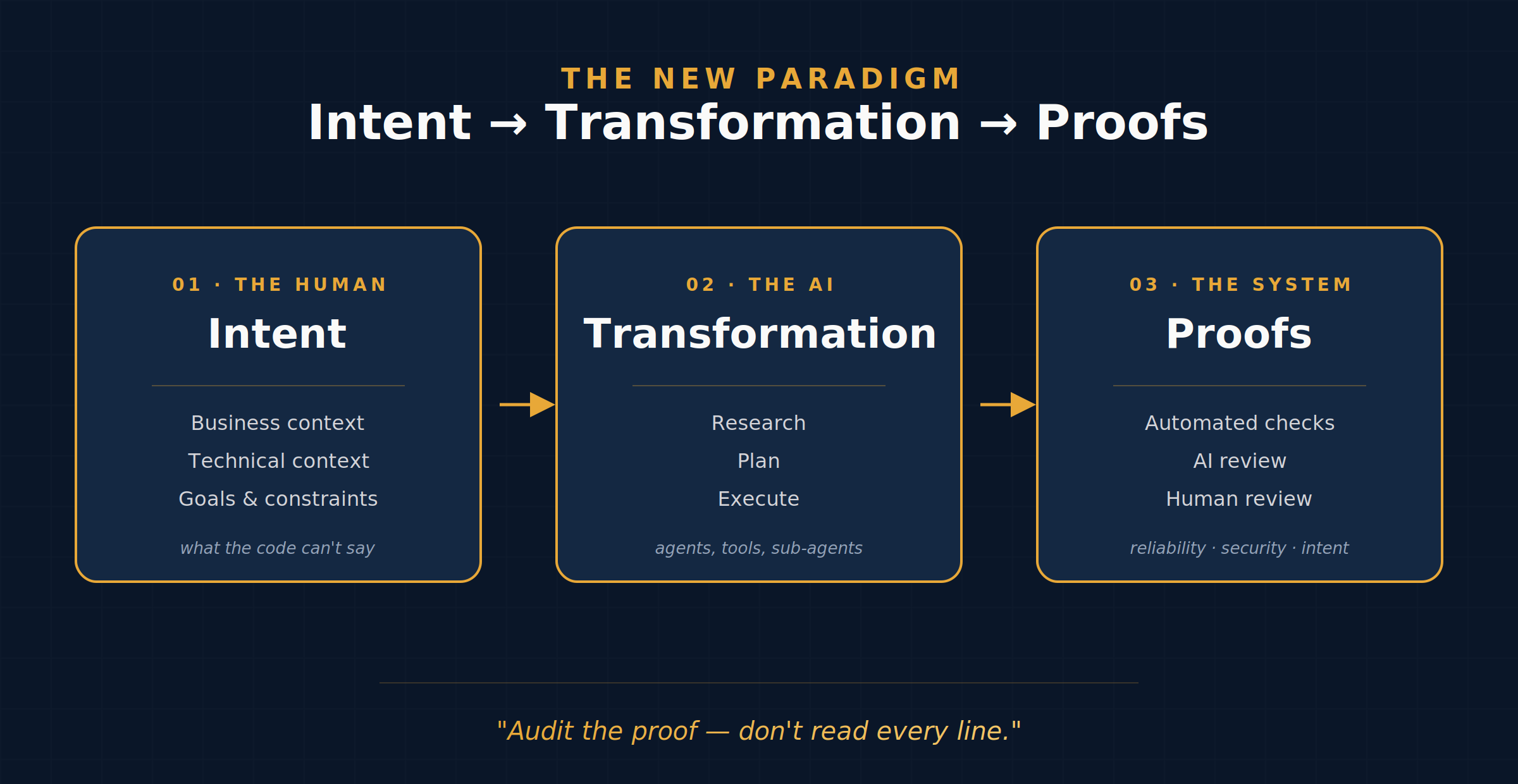

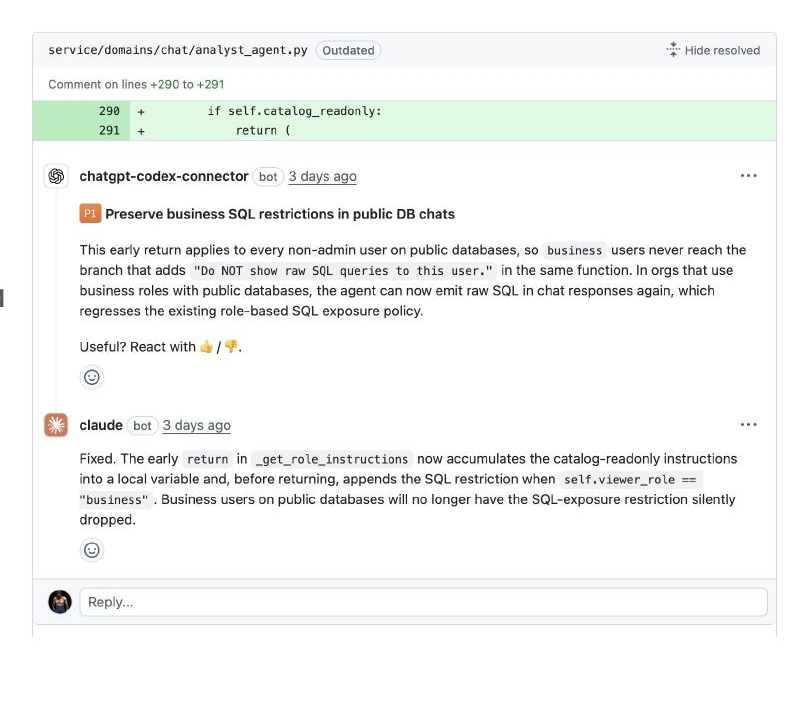

The new paradigm: Intent → Transformation → Proofs

Writing code used to be the hard part. Now the hard parts are on either side of it.

- Intent. What, exactly, are we asking the model to do? What’s the business context, what constraints must the solution respect, what does the code not say that the model needs to know?

- Transformation. The AI does the work — research, plan, execute.

- Proofs. How do we know it worked? Automated checks, AI review, human review. The human’s job shifts from “read every line” to “audit the proof.”

This reframes where we spend our time. Before: typing. After: getting intent right, and getting proofs right.

Intent at Myriade

We try to give the model what we’d give a new hire:

- Business and technical context, captured in `agents.md` and friends: the stuff the code itself can’t tell you.

- Feedback loops that surface what’s actually happening in the product: Sentry for errors, Jam.dev for UX bugs.

- An honest answer to the question “what’s the weight of this product?” — tech debt, in other words. AI scales whatever’s underneath it.

And probably the most underrated part: choosing carefully what to build. Building the wrong thing is cheaper than it used to be, which makes deciding what to build the new bottleneck.

Transformation at Myriade

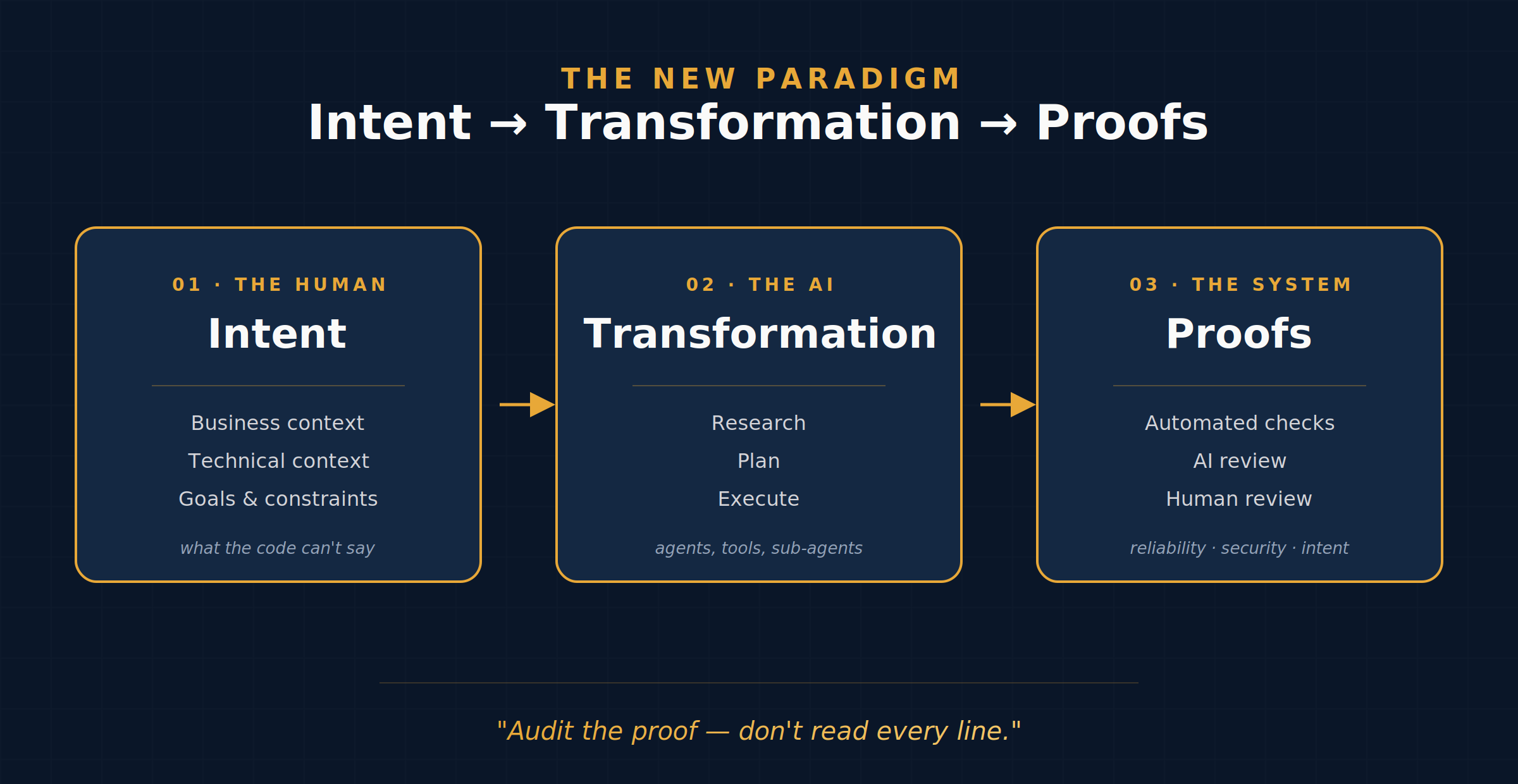

We live mostly on the right half of the AI dev tooling spectrum — interactive agents and cloud agents, not autocomplete.

We start simple and layer in tools as the task demands:

- Claude Code or Codex — CLI for interactive work, cloud sessions for bigger autonomous PRs.

- Plans for anything complex, or anything that will run unattended.

- Sub-agents and Skills to encode recurring patterns (design system, feature flags, MCP integrations).

- MCP servers so the agents can actually do things: Linear for issues, Sentry for bugs, Betterstack for logs, our own Myriade MCP for data.

- Worktrees so multiple agents can run in parallel without stepping on each other.
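To make the worktree idea concrete, here is a minimal sketch that builds one `git worktree add` command per task, so each agent gets its own checkout and its own branch. The task names, the `agent/` branch prefix, the `../worktrees` directory, and the `main` base branch are all hypothetical, chosen for illustration rather than taken from our setup.

```python
from pathlib import Path


def worktree_commands(tasks, root="../worktrees", base="main"):
    """Return one `git worktree add` command per task.

    Each task gets its own directory and branch, so agents working in
    parallel never share a checkout. All names here are hypothetical.
    """
    commands = []
    for task in tasks:
        path = (Path(root) / task).as_posix()
        branch = f"agent/{task}"
        # Shape: git worktree add -b <new-branch> <path> <commit-ish>
        commands.append(["git", "worktree", "add", "-b", branch, path, base])
    return commands
```

The function only builds the commands; running each one from the main checkout (for example with `subprocess.run(cmd, check=True)`) creates the directories, and `git worktree remove <path>` cleans one up once its PR is merged.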

Julian, one of our engineers, runs up to four CLI tabs at once and queues cloud sessions for the bigger tasks. His loop: Explore → Plan → Refine → Execute.

Proofs at Myriade

This is where most teams under-invest, and where the wheels come off.

Pre-review. Pre-commit hooks catch formatting issues, lint errors, type errors, and broken tests before the AI decides it’s done. We also give the agent an unauthenticated path into the app so it can verify its own UI changes.

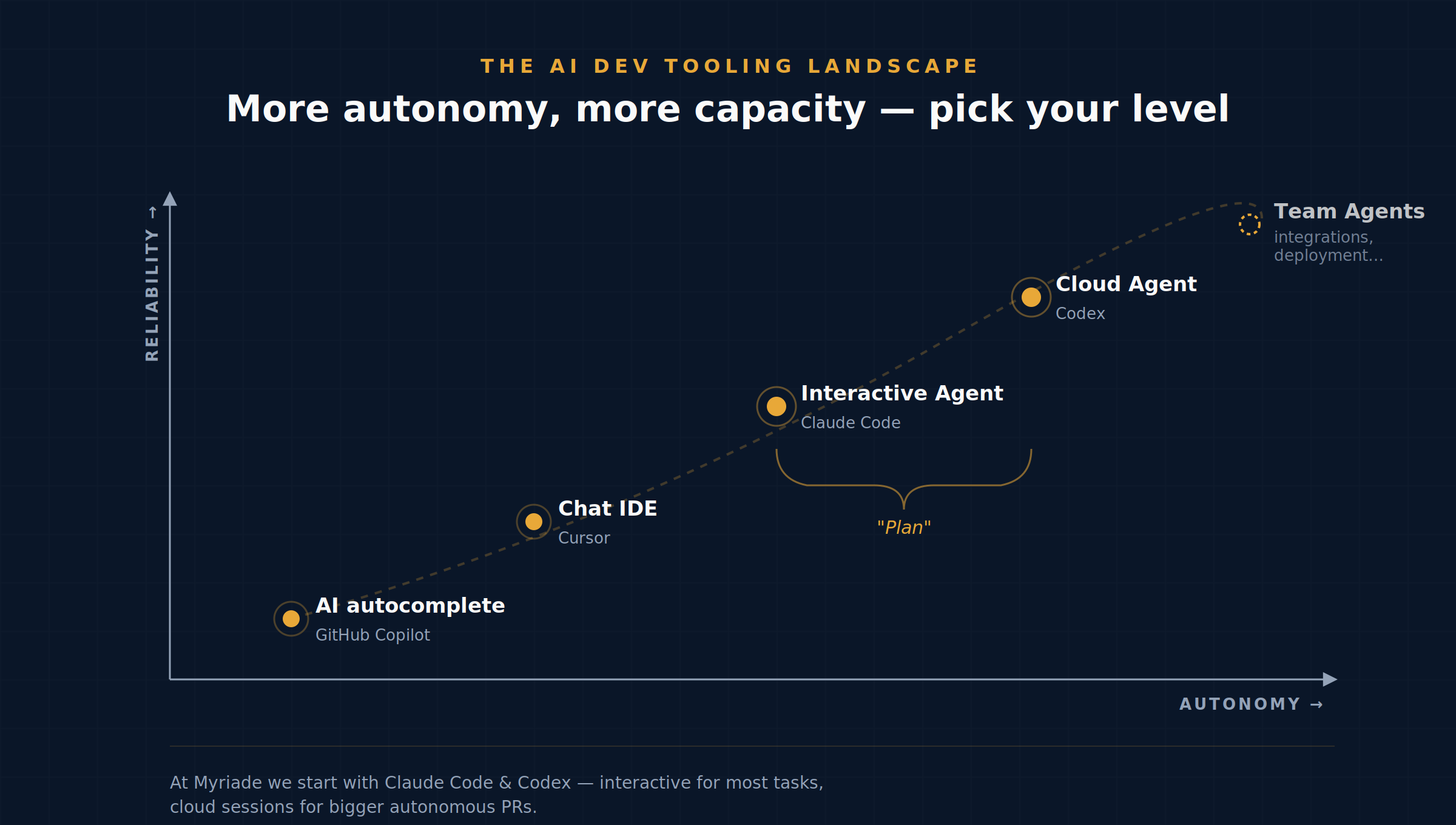
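The pre-commit gate described above can be sketched as a small runner that executes every check and reports the ones that failed. This is an illustration under assumptions, not our actual hook: the check names and commands are whatever your project uses, and a missing tool is treated as a failure so the agent cannot pass by skipping a step.

```python
import subprocess


def run_checks(checks):
    """Run each (name, command) pair in order; return the names that failed.

    All checks run even after a failure, so the agent sees every problem
    in one pass instead of fixing them one at a time.
    """
    failed = []
    for name, command in checks:
        try:
            result = subprocess.run(command, capture_output=True, text=True)
            ok = result.returncode == 0
        except FileNotFoundError:
            # Assumption: a tool that is not installed counts as a failed check.
            ok = False
        if not ok:
            failed.append(name)
    return failed
```

A hook script would call `run_checks` with the project’s formatter, linter, type checker, and test command, then exit non-zero if the returned list is not empty.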

AI review. We use a different model to review than to write — Codex reviews, Claude adapts (or vice versa). The trick is passing the intent to the reviewer along with the diff; otherwise it’s just nitpicking.
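One way to pass the intent along with the diff is to build the review request from both, and refuse to build it when the intent is missing. The sketch below is a hypothetical prompt builder; the wording of the prompt and the function name are assumptions for illustration, not the prompt we use.

```python
def build_review_prompt(intent, diff):
    """Combine the author's intent with the diff into one review request."""
    if not intent.strip():
        # Without the intent, the reviewer can only comment on style.
        raise ValueError("intent is required for a useful review")
    sections = [
        "You are reviewing a pull request.",
        "## Intent\n" + intent.strip(),
        "## Diff\n" + diff.strip(),
        "Judge whether the diff achieves the intent. "
        "Flag bugs and missing cases first; skip style-only comments.",
    ]
    return "\n\n".join(sections)
```

The returned string can be sent to whichever model did not write the change. Putting the intent before the diff means the reviewer reads the goal first and evaluates the code against it.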

Human review. Only where it earns its keep. Our heuristic:

- Frontend changes → preview environment, eyeball the UI, merge.

- Backend changes → review where it matters.

- Migrations, DB schema, core code → ⚠️ always.

- Agentlys, our AI library → every line.
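The heuristic above can be encoded so a PR is labeled automatically from the files it touches. This is a sketch under assumptions: the path patterns, the level names, and the default for unmatched paths are hypothetical and would need to match your repository layout.

```python
from fnmatch import fnmatch

# Review levels, from most relaxed to strictest.
LEVELS = ["preview-only", "where-it-matters", "always", "every-line"]

# Hypothetical path patterns; the first matching rule wins for each path.
RULES = [
    ("every-line", ["agentlys/*"]),
    ("always", ["migrations/*", "*/schema.sql", "core/*"]),
    ("where-it-matters", ["backend/*"]),
    ("preview-only", ["frontend/*"]),
]


def level_for_path(path):
    """Return the review level for a single changed file."""
    for level, patterns in RULES:
        if any(fnmatch(path, pattern) for pattern in patterns):
            return level
    # Assumption: unknown paths get the same treatment as backend code.
    return "where-it-matters"


def review_level(changed_paths):
    """Return the strictest review level triggered by any changed path."""
    levels = [level_for_path(path) for path in changed_paths]
    return max(levels, key=LEVELS.index, default="preview-only")
```

Taking the strictest level across all files means a PR that touches one migration alongside twenty frontend files still gets the full human review.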

To keep human review cheap, we invest in the things that make it cheap: Fly.io preview environments, realistic seed data, and a culture of attaching a short GIF or video to the PR. Five seconds of watching the feature work beats five minutes of reading the diff.

A few things we’ve learned

AI doesn’t fix broken processes — it scales them faster. Before pouring AI into a workflow, make sure you actually understand your tools, the backlog is clean, ownership is clear, and priorities are right. Otherwise you’re just making the mess arrive sooner.

Pick your model carefully. Claude Code is our best ROI right now. For budget work, OpenRouter with Kimi or Minimax is a reasonable fallback.

Double down on the basics. Simple names, strong type systems (TypeScript, Rust), real tests. Every guardrail you add for humans is now a guardrail for the AI too.

What we think this means

The teams that win will be the ones that redesign their system to get the most out of AI — not the ones that just bolt an agent onto the side.

Deciding what to build matters more than ever. Building the wrong thing faster is still building the wrong thing.

And in a world where engineering accelerates, the “rest” — marketing, sales, distribution — gains weight. Which, for those of us building tools for the people downstream of engineering, is an interesting thing to sit with.